Captions and subtitles have become nearly universal in digital media files. We often see content creators and distributors weighing the difference between a Caption Authoring system and a Caption/Subtitle QC system, and asking whether they need both. So we thought it worthwhile to clarify and differentiate the role each software category plays.

Caption/Subtitle Authoring and Caption/Subtitle QC remain two separate and distinct activities. Caption authoring is used to create captions and subtitles, while caption QC is an increasingly important part of any caption/subtitle workflow because it ensures the best possible end-user experience when viewing captions. Until recently, caption QC had remained a time-consuming and resource-intensive manual process. Advanced, automated caption QC software, such as CapMate from Venera Technologies, now provides a logical alternative to the tedious manual process by automating a large portion of it.

To further clarify and differentiate between caption authoring tools and caption QC tools, we will examine some scenarios to help you appreciate the importance of specialized caption QC systems.

Caption/Subtitle Creation

A typical authoring system lets you generate raw captions using ASR (Automatic Speech Recognition) technology, which provides the first draft of the captions. An operator then has to add the captions that the ASR missed and properly align and format all the captions as needed. Authoring systems may provide basic measurements such as CPS (Characters Per Second), WPM (Words Per Minute) and CPL (Characters Per Line) that allow you to rectify basic ‘timing’ issues. Since the raw captions are generated by the tool itself, they are expected to be aligned with the audio they were generated from. However, the responsibility of aligning any new captions you add lies with you. Authoring tools typically cannot analyze sync issues on user-added segments. Another common requirement is to ensure that captions are not placed on top of burnt-in text in the video. Again, an operator has to watch the entire video to make sure that no such overlaps exist. A similar full review of the captions against the video is required for many other common issues.
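To make the basic measurements mentioned above concrete, here is a minimal Python sketch of how CPS, WPM and CPL could be computed for a single caption. The `Caption` class and the `caption_metrics` function are hypothetical names used only for illustration, and the counting rules (spaces count as characters, line breaks do not) are assumptions; actual tools and delivery specifications may count differently.

```python
from dataclasses import dataclass


@dataclass
class Caption:
    """Hypothetical caption record used only for this illustration."""
    start: float  # start time in seconds
    end: float    # end time in seconds
    text: str     # caption text, lines separated by "\n"


def caption_metrics(cap: Caption) -> dict:
    """Return CPS, WPM and CPL for one caption."""
    duration = cap.end - cap.start
    if duration <= 0:
        raise ValueError("caption must have a positive duration")
    lines = cap.text.split("\n")
    # Assumption: spaces count as characters, line breaks do not.
    chars = sum(len(line) for line in lines)
    words = len(cap.text.split())
    return {
        "cps": chars / duration,            # characters per second
        "wpm": words / duration * 60,       # words per minute
        "cpl": max(len(line) for line in lines),  # longest line
    }


metrics = caption_metrics(
    Caption(start=10.0, end=12.0, text="Hello there,\nhow are you?")
)
print(metrics)  # {'cps': 12.0, 'wpm': 150.0, 'cpl': 12}
```

Numbers like these flag captions that are too dense to read comfortably, but they say nothing about sync with the audio or overlap with burnt-in text.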

So, while an authoring system allows you to create, edit and format your captions efficiently, it usually doesn’t provide rich analysis capabilities to QC the captions. The responsibility of detecting basic issues and correcting them lies with you.

Caption/Subtitle Compliance

Let’s take this a step further. In today’s world, ensuring the basic sanity of captions is not enough. Every major broadcaster or OTT service provider or educational content provider has its own technical specifications for the captions it requires. There can be many such requirements, a few of which are as follows:

– Max number of lines of caption per screen.

– Minimum and Maximum duration of each caption.

– Caption sync aligned with the audio to within a specified sub-second threshold.

– All captions to be placed at the bottom third of the screen, while avoiding burnt-in text overlay. In case of overlap, another position may be used.

– Detection of profanity (words defined by the user to be unacceptable).

– Spelling checks.
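Some of the requirements listed above are simple enough to express as rule checks. The following Python sketch shows one way a specification could be encoded and applied to a caption. The names `QCTemplate` and `check_caption` and the default threshold values are assumptions for illustration; they are not taken from any particular broadcaster's specification or from any product. Sync and burnt-in text checks are left out because they require audio and video analysis.

```python
from dataclasses import dataclass


@dataclass
class Caption:
    """Hypothetical caption record used only for this illustration."""
    start: float  # seconds
    end: float    # seconds
    text: str     # lines separated by "\n"


@dataclass
class QCTemplate:
    """Illustrative thresholds; real specifications vary by customer."""
    max_lines: int = 2
    min_duration: float = 1.0  # seconds
    max_duration: float = 7.0  # seconds
    banned_words: frozenset = frozenset()


def check_caption(cap: Caption, tpl: QCTemplate) -> list:
    """Return a list of human-readable issues found in one caption."""
    issues = []
    n_lines = len(cap.text.split("\n"))
    if n_lines > tpl.max_lines:
        issues.append(f"too many lines: {n_lines} > {tpl.max_lines}")
    duration = cap.end - cap.start
    if duration < tpl.min_duration:
        issues.append(f"too short: {duration:.2f}s")
    elif duration > tpl.max_duration:
        issues.append(f"too long: {duration:.2f}s")
    # Strip surrounding punctuation before comparing against the banned list.
    words = {w.strip(".,!?;:\"'").lower() for w in cap.text.split()}
    hits = sorted(words & tpl.banned_words)
    if hits:
        issues.append("banned words: " + ", ".join(hits))
    return issues


template = QCTemplate(banned_words=frozenset({"darn"}))
bad_issues = check_caption(Caption(0.0, 0.5, "One\nTwo\nDarn!"), template)
good_issues = check_caption(Caption(0.0, 2.0, "All fine here."), template)
print(bad_issues)
print(good_issues)
```

Keeping the thresholds in a separate template object means the same checks can be rerun against a different customer's specification by changing only the template.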

Caption/Subtitle Editing

So far, we have discussed only the caption creation scenario. Often, however, an existing caption file needs to be repurposed because the audio-visual content has been edited. Such changes can include the addition of video segments, the removal of segments, changes to caption location based on customer guidelines, or frame rate changes. We have encountered many cases where customers tried to use the original captions with such edited content, which leads to a lot of issues. Detecting and correcting these issues manually can be time-consuming and resource-intensive. Since authoring tools do not usually provide auto-analysis capabilities, they cannot help with detecting them. The only way to use a caption authoring system in this case is to go through its user interface and detect and correct the issues manually.
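As a simplified illustration of why original captions stop matching edited content, consider the removal of a single video segment. The Python sketch below is a hypothetical helper, not a description of how any particular tool works. It shifts the captions that come after the cut, drops the captions that fall entirely inside it, and flags the ones that straddle a cut boundary for manual review. Captions are assumed to be plain `(start, end, text)` tuples with times in seconds.

```python
def retime_after_cut(captions, cut_start, cut_end):
    """Adjust captions after the segment [cut_start, cut_end) is removed.

    Returns (kept, flagged): captions with corrected timings, and captions
    that straddle a cut boundary and need an operator's decision.
    """
    cut_len = cut_end - cut_start
    kept, flagged = [], []
    for start, end, text in captions:
        if end <= cut_start:
            # Entirely before the cut: timing is unchanged.
            kept.append((start, end, text))
        elif start >= cut_end:
            # Entirely after the cut: shift earlier by the removed length.
            kept.append((start - cut_len, end - cut_len, text))
        elif start >= cut_start and end <= cut_end:
            # Entirely inside the removed segment: drop it.
            continue
        else:
            # Straddles a cut boundary: cannot be fixed automatically here.
            flagged.append((start, end, text))
    return kept, flagged


captions = [
    (1.0, 3.0, "before the cut"),
    (10.0, 12.0, "inside the cut"),
    (19.0, 22.0, "straddles the cut end"),
    (25.0, 27.0, "after the cut"),
]
kept, flagged = retime_after_cut(captions, cut_start=10.0, cut_end=20.0)
print(kept)     # [(1.0, 3.0, 'before the cut'), (15.0, 17.0, 'after the cut')]
print(flagged)  # [(19.0, 22.0, 'straddles the cut end')]
```

Real edits usually involve several cuts, inserted segments and frame rate changes at once, which is why checking the result by hand quickly becomes impractical.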

Any compromise in this manual process will lead to missed issues in the content delivered to the customer or content owner. In practice, this means multiple iterations before the captions are accepted, causing further delays and hurting customer satisfaction.

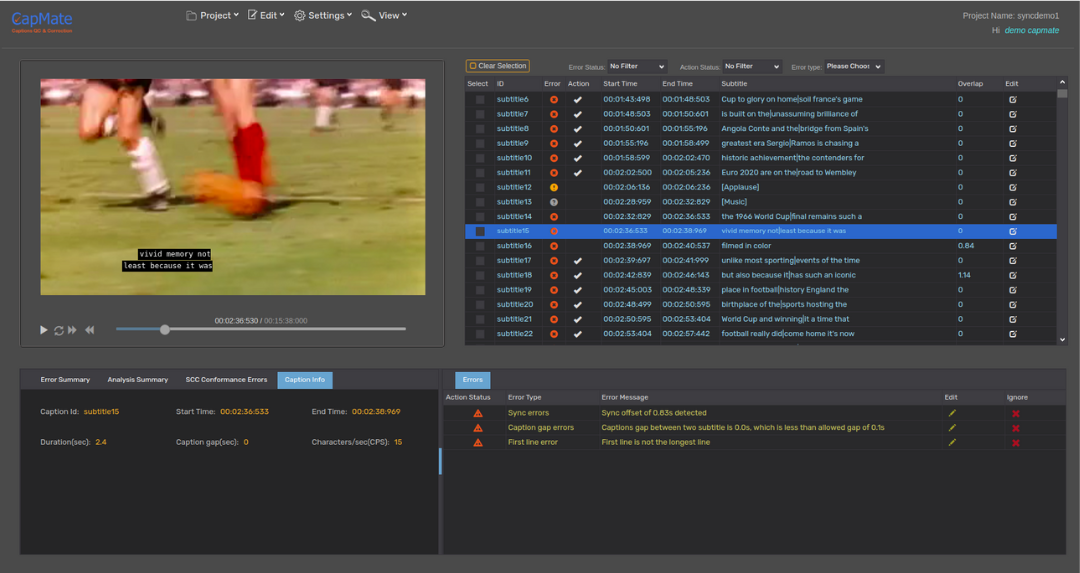

This is where caption QC tools come in. Caption QC systems address these issues head-on by performing auto-detection (and, in the case of advanced systems like CapMate, auto-correction) for a wide range of caption issues. With configurable QC templates, you can set up the checks you need, define the acceptable thresholds, and let the system do its job. Since the aim of such systems is analysis, the entire interface is designed to make analysis and spotting quick and efficient. You only need to act upon the issues reported by the caption QC system. An intelligent caption QC system such as CapMate also provides features to automatically correct many of the issues found, as well as a rich review/editing tool with which you can easily browse through the reported issues and make the appropriate manual corrections efficiently. You no longer need to watch the entire content.

Not using caption QC tools means that the responsibility of detecting and correcting all caption issues lies with you, which is time-consuming and resource-intensive, not to mention error-prone.

The concept of ‘Automated Captions QC & Correction’ is relatively new, but adopting such a system can lead to significant business benefits. Our customers who have adopted CapMate into their workflows are benefiting from more efficient caption QC operations, thanks to the insights the tool provides and its auto-correction abilities.

In conclusion, Caption QC and Caption Authoring tools serve different and complementary purposes in the caption workflow, and each does its own job well. Caption QC tools are not intended for caption authoring, and Caption Authoring tools are neither intended for nor well-suited to an efficient caption QC process. Using both tools judiciously in a workflow can lead to higher-quality caption deliveries and more efficient use of experienced QC operators.

About CapMate™

CapMate™ is a Cloud Native SaaS service for Captions/Subtitles QC and Correction. Whether you are a Captioning service provider, OTT service provider, Broadcaster or a Captioning platform, CapMate can significantly improve your workflow efficiency with its automatic analysis, rich review, spotting, and correction capabilities. Once QC and correction are complete, you can export the finished captions for direct use in production.

Get in touch with us today. We would love to discuss how we can help you solve your content QC challenges efficiently!

This content originally appeared here on: https://www.veneratech.com/caption-subtitle-qc-vs-authoring/